THE ARCHITECTS OBSERVABILITY GAP: HOW GEMINI AND NOTEBOOKLM REBUILT MY RECOVERY

As a Software Architect, my career is built on the pillars of observability, data integrity, and system reliability. When I faced two major knee surgeries over the past year—including a complex meniscus revision—I realized that the modern medical experience has a massive observability gap. Patient data is siloed, imaging is interpreted with extreme caution, and “rehab protocols” are frequently just a stack of loose, disconnected PDFs.

During this journey, I didn’t just use AI; I integrated Google Gemini and NotebookLM into my primary tech stack for life. Here is how Google Cloud’s AI ecosystem became my recovery co-pilot.

Phase 1: Precision Diagnosis when the “Logs” were Unclear

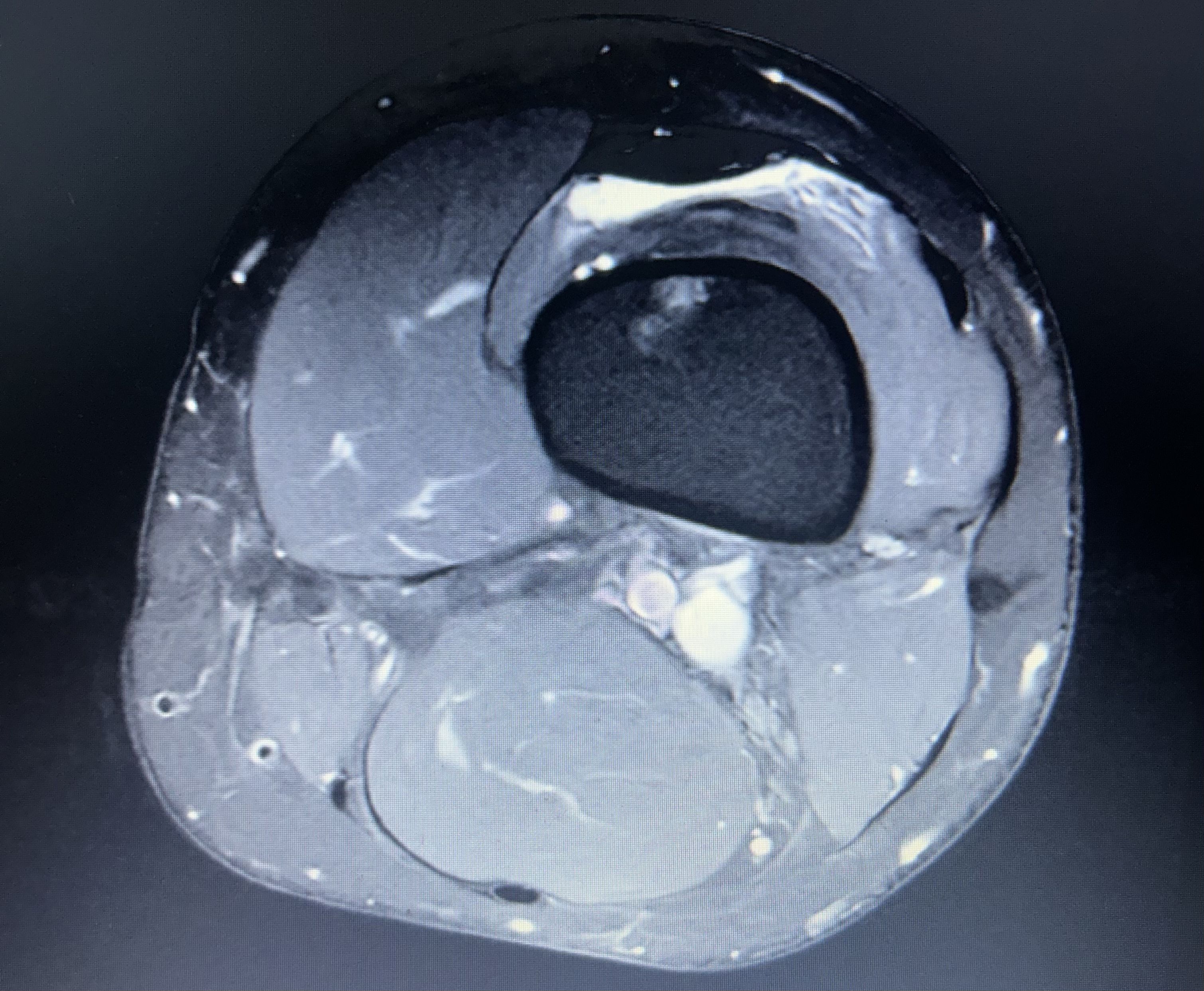

In the world of Site Reliability Engineering (SRE), if your logs are ambiguous, you look for better telemetry. In orthopedics, that telemetry is the MRI.

Before my first surgery, the clinical consensus was “wait and see.” The doctors were unclear on the extent of the damage, stating they wouldn’t know for sure until they were “inside the knee” on surgery day. As a former collegiate athlete and an engineer, “wait and see” wasn’t a satisfying roadmap.

I decided to upload my raw MRI images to Gemini. Leveraging its advanced multimodal reasoning, Gemini identified a meniscus root tear—a specific, high-stakes injury that requires a much different surgical approach than a standard trim.

The Result: When the surgeon finally performed the procedure, Gemini’s “prediction” was confirmed. Having this insight early allowed me to mentally and logistically prepare for a non-weight-bearing recovery, rather than being blindsided post-op. This was my “Aha!” moment: Gemini is now my primary LLM because it doesn’t just process text; it “sees” complex data with architectural precision.

Phase 2: Building the “Recovery Studio” with NotebookLM

Post-surgery, I was hit with a distributed systems problem: my data lived everywhere. I had operative reports in one portal, DEXA scans showing osteopenia in another, and dozens of physical therapy protocols in various PDF formats.

I used NotebookLM to create a private, grounded Recovery Studio. By uploading my entire medical history—test results, surgery notes, and rehab scripts—I transformed static documents into an interactive knowledge base.

Why this was a game-changer:

- Grounded Context: Unlike general AI, NotebookLM only answered based on my documents. I could ask, “Compare my week 4 progress against the specific meniscus root repair protocol,” and get a cited, accurate answer.

- Synthesis of Complex Data: I could upload new blood work and ask, “How do these Vitamin D levels impact my recovery plan given my osteopenia diagnosis?”

- Trend Analysis: It helped me track the “long game,” summarizing weeks of rehab notes to show me that, while Tuesday felt hard, the month-over-month trend was positive.

Phase 3: Scaling Human Potential with Google Cloud

This experience shifted my professional perspective. Using Google AI Studio to experiment with these models showed me that the future of healthcare—and software—is about empowering the end-user with agency.

As a Google Developer Expert, I’ve worked with many platforms, but the integration between Firebase for data, Gemini for reasoning, and the Google Cloud Console for scale is unmatched.

Final Thoughts

We often view AI as a tool for work, but it is a profoundly human tool for life. It helped me move from a “patient” (a passive recipient of care) to an “architect” of my own recovery.

If you are navigating a complex journey either technical or medical, don’t just rely on the defaults. Build your own studio. Trust the vision models.

Resources for Developers

- Build your own AI apps: Check out Google for Developers.

- Deepen your Web knowledge: Explore web.dev for building accessible health dashboards.

- Mobile-First Recovery: See how to integrate these tools on Android, with Flutter, or Angular.